16 Jan Computer-Generated Holography

This is the final instalment in the series discussing what holography is (and isn’t!).

In my previous post “The Inception of Holography”, we realised that traditional holograms are very much like photos – once recorded, they preserve the 3D nature of the object. And just like photos, they do not change in time.

However, in order for holograms to be useful in a television or computer monitor, we need moving images. This is precisely where Computer-Generated Holograms come into play. In this post, we will take a deep dive into these fascinating holograms, which are calculated by computers and displayed on a special device.

Hologram “Engineers” What You See

Imagine looking at the object – a flower in its pot.

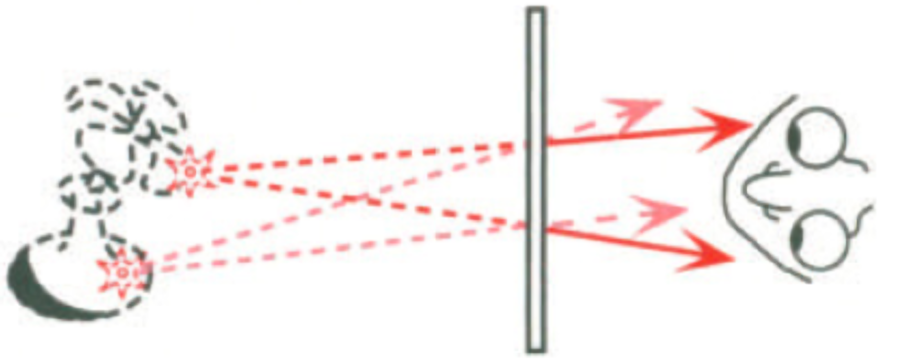

What you see is NOT actually the flower. Instead, you see THE LIGHT that interacted with it and somehow ended up in your eyes. As it happens, holograms are capable of reconstructing the same light as the real object would produce. Physicists describe that light in terms of wavefronts (the surfaces traced out by the crests of the waves), which is why this technique earned the name of wavefront reconstruction. For a more detailed explanation, see my “Inception of Holography” post.

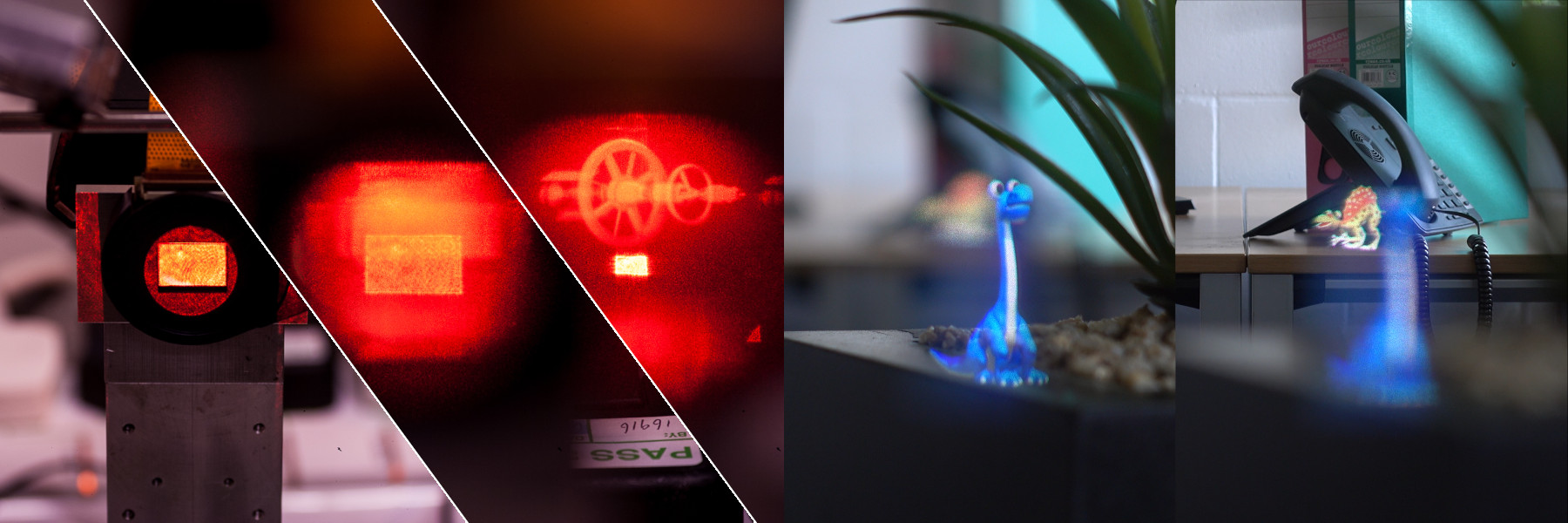

Drawings, diagrams, and text only show certain aspects of the full story, so let’s take a look at

Real-time Computer-Generated Holograms!

In 2019, VividQ created its Mixed Reality demo. It’s polished, pretty and impressive. However, the video I prefer to use when I explain holography is much more basic (and pre-dates VividQ). Since 2015, I haven’t found (nor created) anything that explains the principles of holography and diffraction any better.

What has just happened?

What you have just witnessed in the video is the purest form of diffraction. The bright red rectangle at the beginning is the light-shaping device, illuminated by the laser. Despite the device being really small, it has more than a thousand by a thousand little modulating elements (pixels). Each of those pixels acts as a small aperture which can be open or closed like a tiny window.

An open window lets the light through and a closed one blocks it. Because the window is really small, the light passing through it displays its wavy nature: it spreads out in all directions, as if the window were a tiny light bulb.

Some of you may find parallels with one of my previous posts, “Let there be light”, where I explained the famous Young’s Slits Experiment. We can think of Computer-Generated Holograms as an array of VERY MANY slits in two dimensions. The mixing of all those waves is called

Diffraction and Interference

Below, you can see how it looks when you’re very close to the hologram:

Whenever a bunch of waves meet at their high points, they add up to a bright spot, and we call it “constructive interference”. The reverse happens if half of the waves are at their peak while the other half are at their trough. The waves then cancel out, which means “destructive interference” and no light at that point.
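The two cases can be checked with a few lines of arithmetic. The snippet below is only a minimal sketch: it assumes every wave has the same unit amplitude and represents each one as a complex number whose angle is its phase. The function name `total_intensity` is made up for this illustration.

```python
import cmath
import math


def total_intensity(phases):
    """Brightness where unit-amplitude waves with the given phases (radians) meet."""
    # Each wave is a complex number; adding them adds the waves themselves.
    field = sum(cmath.exp(1j * phase) for phase in phases)
    # Brightness (intensity) is the squared magnitude of the combined wave.
    return abs(field) ** 2


# Eight waves that all arrive at their peak: they reinforce each other.
constructive = total_intensity([0.0] * 8)

# Four waves at their peak and four at their trough (shifted by pi): they cancel.
destructive = total_intensity([0.0] * 4 + [math.pi] * 4)

print(f"constructive: {constructive:.3f}, destructive: {destructive:.3f}")
```

Note that eight waves in step give 64 units of brightness rather than 8, because the amplitudes add before they are squared.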

Since we have a lot of little windows to open and close, you can imagine that it can lead to creating pretty complicated shapes.

Having said that, let’s look at what happens with a hologram similar to the one we previously displayed on the modulator to create our space station.

To do that realistically, I’ve written a piece of code which simulates the behaviour of light by essentially looking at every single light wave. Then, I ran this beast of a simulation for about a month to create the video below (fingers crossed I don’t end up with a hefty electricity bill!).
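The code behind that simulation is not reproduced in this post. As a rough illustration of what “looking at every single light wave” can mean, here is a much smaller sketch in one dimension: every open window emits a spherical wavelet, and the brightness on a screen is the squared magnitude of their sum. The wavelength, pixel pitch, screen distance, and the function name `screen_intensity` are all assumptions chosen for the example, not values from the actual setup.

```python
import numpy as np

WAVELENGTH = 633e-9   # metres; a red laser is assumed
PIXEL_PITCH = 8e-6    # metres between neighbouring windows; assumed value


def screen_intensity(windows, screen_x, distance):
    """Add up one spherical wavelet per open window at every screen position."""
    windows = np.asarray(windows, dtype=bool)
    k = 2 * np.pi / WAVELENGTH
    # Window centres along one dimension, centred on x = 0.
    pixel_x = (np.arange(windows.size) - (windows.size - 1) / 2) * PIXEL_PITCH
    open_x = pixel_x[windows]
    # Path length from every open window to every screen position.
    r = np.hypot(distance, screen_x[:, None] - open_x[None, :])
    # Sum the wavelets (phase k*r, amplitude falling off as 1/r).
    field = np.sum(np.exp(1j * k * r) / r, axis=1)
    return np.abs(field) ** 2


# Two open windows 100 pixels apart: a Young's slits arrangement.
windows = np.zeros(101, dtype=bool)
windows[[0, 100]] = True

distance = 1.0  # metres from the modulator to the screen (assumed)
slit_separation = 100 * PIXEL_PITCH
fringe_spacing = WAVELENGTH * distance / slit_separation

# Sample the centre of the pattern and the point half a fringe away.
screen_x = np.array([0.0, fringe_spacing / 2])
bright, dark = screen_intensity(windows, screen_x, distance)
print(f"bright fringe: {bright:.3f}, dark fringe: {dark:.6f}")
```

The cost of this brute-force approach grows with the number of windows multiplied by the number of screen points, which gives a feel for why a full two-dimensional simulation of a million-pixel device can run for weeks.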

In Summary

1. CGH is calculated digitally and displayed on a light-modulating device

‘Holograms’ as we know them from our credit cards and museums are very much like photographs. Once recorded, they don’t change. Computer-Generated Holograms are like monitors: we can choose to display any content we desire. However, there is one crucial difference: calculating holograms is a challenging computational task.

When looking at your TV, mobile phone, or the screen of your smart fridge, your eyes focus on the surface of the display, which contains an array of radiating elements, like little Light Emitting Diodes. Those elements give off light in all directions.

In contrast, when you look at a hologram, you gaze into the light. This light then appears as if it came from the object, which could be behind or in front of the display.

In order to display that hologram, you need to understand the behaviour of light. Basically, for every frame, the computer asks itself: ‘how shall I open and close those million windows so that the combined effect of a million waves forms the image of a dinosaur?’ As you can probably imagine, it is a non-trivial question to answer. In the 1990s, MIT essentially built a custom-designed supercomputer to be able to handle the processing.
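A dinosaur is too ambitious for a short example, but the same question can be answered for a single bright dot. The sketch below uses one simple rule, which is an assumption made for illustration and not the algorithm used by MIT, VividQ, or in the videos above: open a window only if its wave would arrive at the target roughly in step with the others. All names and numbers (`hologram_for_point`, the wavelength, the pixel pitch, the distance) are hypothetical.

```python
import numpy as np

WAVELENGTH = 633e-9   # metres; a red laser is assumed
PIXEL_PITCH = 8e-6    # metres between neighbouring windows; assumed value
K = 2 * np.pi / WAVELENGTH


def window_positions(n):
    """Centres of n windows along one dimension, centred on x = 0."""
    return (np.arange(n) - (n - 1) / 2) * PIXEL_PITCH


def hologram_for_point(n, target_x, distance):
    """Open only the windows whose wave reaches the target near its peak."""
    r = np.hypot(distance, target_x - window_positions(n))
    # cos(K*r) > 0 means this wavelet adds to the total instead of cancelling it.
    return np.cos(K * r) > 0.0


def intensity_at(windows, x, distance):
    """Brightness at screen position x from all open windows."""
    open_x = window_positions(windows.size)[windows]
    r = np.hypot(distance, x - open_x)
    return np.abs(np.sum(np.exp(1j * K * r) / r)) ** 2


n, distance = 1000, 0.2
hologram = hologram_for_point(n, target_x=0.0, distance=distance)
all_open = np.ones(n, dtype=bool)

focused = intensity_at(hologram, 0.0, distance)      # at the target
unshaped = intensity_at(all_open, 0.0, distance)     # same spot, every window open
off_target = intensity_at(hologram, 1e-3, distance)  # 1 mm away from the target

print(f"gain over all-open: {focused / unshaped:.1f}")
print(f"target vs 1 mm away: {focused / off_target:.1f}")
```

Even though roughly half of the windows are closed, the target ends up far brighter than with every window open, because the remaining waves no longer cancel each other. A real image needs this kind of decision for every point of the scene at once, for every frame, which is where the computational cost comes from.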

2. Real-time holography became possible only recently

For a very long time, holography was out of reach for the average person. The cost of computation was so great that only researchers with supercomputers or computational clusters could achieve anything close to real-time performance. Real-time means that the display updates fast enough for people to perceive smooth movement: ~15 frames per second (fps) is the bare minimum, and ~30 fps and beyond is what every commercial display does these days.

Personally, when I started my PhD, I remember running the code for a day to get a single frame of a hologram. By my second year, I was computing a frame every 5 seconds. Only when I learnt CUDA (a parallel programming platform that runs on Graphics Processing Units) did I break the real-time barrier (link to the original publication). And I may have been one of the first people in the world to do that!
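To put those numbers in perspective, the short calculation below works out how much faster the code had to become to reach the ~15 fps bare minimum mentioned above. It only uses the figures quoted in this post; the helper name `speedup_needed` is made up for the example.

```python
SECONDS_PER_DAY = 24 * 60 * 60
REAL_TIME_FPS = 15  # the "bare minimum" frame rate quoted above


def speedup_needed(seconds_per_frame, target_fps=REAL_TIME_FPS):
    """How many times faster a frame must be computed to hit target_fps."""
    return seconds_per_frame * target_fps


print(speedup_needed(SECONDS_PER_DAY))  # one frame per day   -> 1296000
print(speedup_needed(5))                # one frame every 5 s -> 75
```

In other words, going from a frame per day to real time is a speed-up of more than a million times.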

3. What’s next? Well, the true 3D display – of course

It has been the dream of generations of researchers and scientists, just as it has been my dream and my personal mission to introduce holographic displays to the world. Holography offers plenty of benefits to the world of displays, and the three-dimensional image is only one of many. Displays are all around us, and every display would benefit from escaping the flat screen and becoming holographic.